Redefining Data Strategy: The Comprehensive Azure to Microsoft Fabric Migration Roadmap


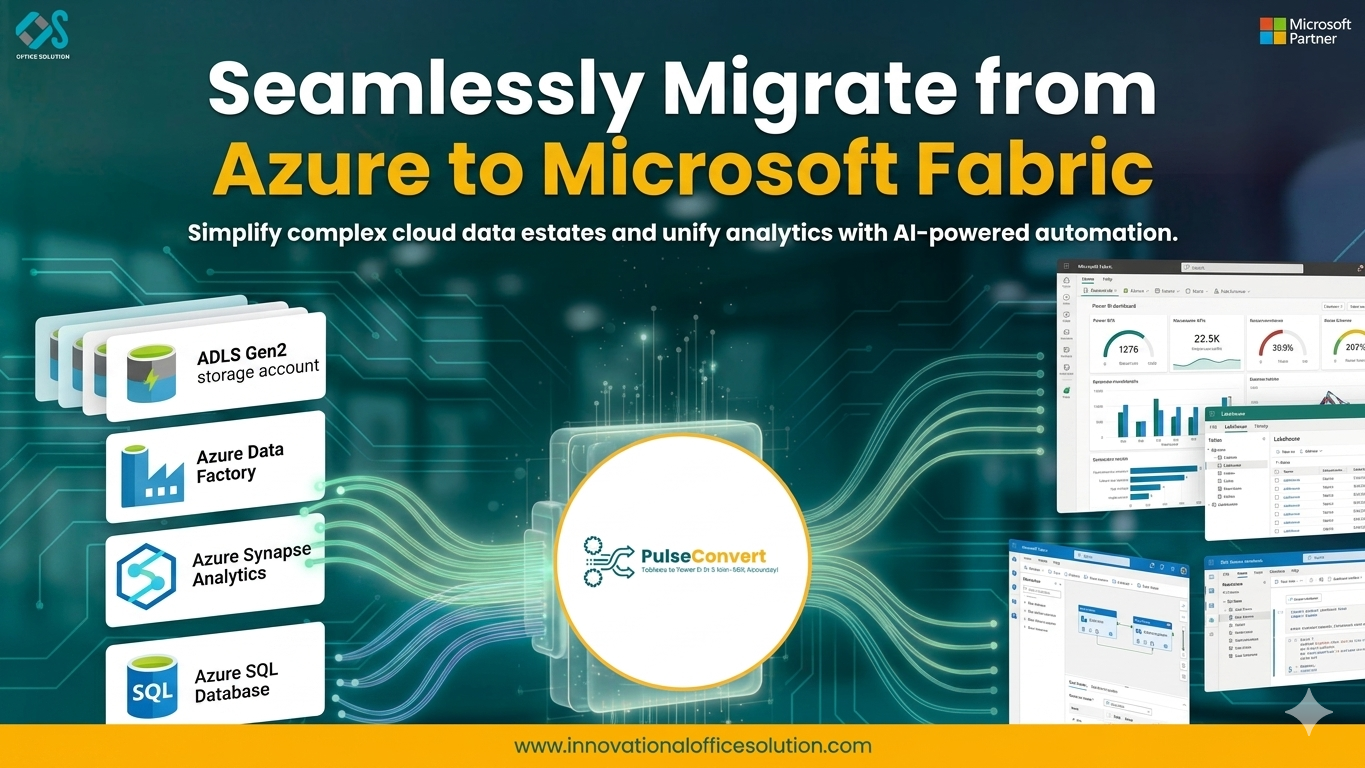

Modern enterprises are moving away from the "some assembly required" model of cloud data management. For teams that built their analytics estate on separate Azure services such as Azure Data Factory and Azure Synapse Analytics, Microsoft Fabric offers a clear path forward. Migrating from Azure to Microsoft Fabric can modernize your data stack, reduce operational complexity, and prepare your data for generative AI workloads. The platform prioritizes integration over isolation, so your team can focus on what matters most: the data itself.

Why Every Azure Customer is Looking at Fabric

The momentum behind Azure to Microsoft Fabric migration is driven by the desire for a unified developer experience. Microsoft Fabric brings the tools for the whole data lifecycle into a single UI, so there is no more jumping between portals to manage ingestion, transformation, and visualization. This "single pane of glass" approach is a central theme of our Azure to Microsoft Fabric migration blog, which serves as a roadmap for businesses ready to shed the burden of managing separate PaaS services.

The Power of OneLake and Open Data Formats

A commitment to open standards is a pillar of the Azure to Fabric transition. Fabric stores tabular data in OneLake, its unified storage layer, using Delta Lake, an open-source table format built on Parquet files. Because the format is open, your data is not locked into a proprietary engine. After migrating from Azure to Microsoft Fabric, your data remains accessible to any tool that supports the Delta format, which provides long-term flexibility and helps protect your data investment.
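To make the open-format point concrete, here is a minimal sketch of how a Delta table in OneLake can be addressed from outside Fabric. OneLake exposes an ADLS-compatible endpoint, so a table resolves to an `abfss://` URI. The helper name `onelake_table_uri` and the workspace, lakehouse, and table names are hypothetical, and the sketch assumes names without spaces or special characters (workspace and item GUIDs are the safer choice otherwise).

```python
ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_table_uri(workspace: str, lakehouse: str, table: str) -> str:
    """Build an abfss:// URI for a Delta table stored in a Fabric lakehouse.

    Hypothetical helper for illustration; it only formats a string and
    does not check that the workspace, lakehouse, or table exists.
    """
    for label, value in (("workspace", workspace),
                         ("lakehouse", lakehouse),
                         ("table", table)):
        if not value or "/" in value:
            raise ValueError(f"{label} must be a non-empty name without '/'")
    return (f"abfss://{workspace}@{ONELAKE_HOST}/"
            f"{lakehouse}.Lakehouse/Tables/{table}")


# Illustrative names only; replace with your own workspace and items.
uri = onelake_table_uri("SalesWorkspace", "SalesLakehouse", "orders")
print(uri)
```

A Delta-capable reader that is authenticated against your tenant can then load the table from that URI, for example with `spark.read.format("delta").load(uri)` in a Spark session.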

Rethinking Data Engineering: From Pipelines to Lakehouses

The Azure to Microsoft Fabric migration transforms the way data engineering is performed. In the legacy Azure model, you might use Azure Data Factory (ADF) for movement and Synapse for transformation. In Fabric, both come together around the Lakehouse. You can use low-code Data Factory activities for simple moves and Spark notebooks for complex logic, with both writing to the same Delta tables. This streamlined workflow is a key reason many companies follow the guidance found in comprehensive Azure to Fabric migration guides.

Scaling with Ease: The Capacity Unit Model

One of the most significant changes when moving from Azure to Fabric is how compute is managed. Instead of provisioning specific sizes for individual databases or Spark clusters, you purchase a capacity measured in Capacity Units (CUs). All workspaces assigned to that capacity draw on the same pool of CUs. If the data science team is training a large model, it can use more of the pool; when the job finishes, those resources become available again for the BI team's reports. This fluid resource allocation is a major operational benefit of the Fabric model.
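The shared-pool idea can be illustrated with a deliberately simplified toy model. The `CapacityPool` class, the workload names, and the pool size of 64 are all assumptions made for this sketch. Fabric's real scheduler also applies smoothing, bursting, and throttling, none of which is modeled here.

```python
from dataclasses import dataclass, field


@dataclass
class CapacityPool:
    """Toy model of one pool of Capacity Units shared by several workloads."""
    total_cus: int
    in_use: dict[str, int] = field(default_factory=dict)

    @property
    def available(self) -> int:
        # Units not currently held by any workload.
        return self.total_cus - sum(self.in_use.values())

    def acquire(self, workload: str, cus: int) -> int:
        """Grant up to `cus` units to a workload and return the amount granted."""
        granted = min(cus, self.available)
        if granted > 0:
            self.in_use[workload] = self.in_use.get(workload, 0) + granted
        return granted

    def release(self, workload: str) -> None:
        """Return everything a workload holds to the pool."""
        self.in_use.pop(workload, None)


pool = CapacityPool(total_cus=64)                   # arbitrary pool size
pool.acquire("data-science-training", 48)           # heavy training job
bi_during = pool.acquire("bi-reports", 32)          # only part can be granted
pool.release("data-science-training")               # training finishes
bi_after = pool.acquire("bi-reports", 16)           # remainder is now free
print(bi_during, bi_after, pool.available)
```

The point of the sketch is the accounting, not the numbers: no team owns a fixed slice, so capacity freed by one workload can be picked up by another without reprovisioning anything.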

Real-Time Analytics and the Kusto Engine in Fabric

Business moves faster than ever, and batch processing alone is often no longer enough. As part of an Azure to Microsoft Fabric migration, organizations gain access to real-time analytics powered by the Kusto engine. The engine is built to ingest very high event volumes and query them with low latency, although the throughput you achieve depends on your capacity size and workload. Whether you are monitoring IoT devices or tracking web traffic, having this capability integrated directly with your lakehouse ensures that your real-time insights are never siloed from your historical data.

Bridging the Gap for Data Scientists

For many organizations, data science has traditionally been a separate "island" of activity. Migrating from Azure to Microsoft Fabric changes this by providing a dedicated Data Science experience that sits right on top of OneLake. Data scientists can build, train, and deploy machine learning models using the exact same data that the engineering team is processing. There is no more data movement or complex environment synchronization required, which significantly accelerates the path from experiment to production.

The Role of Purview in Unified Governance

Consolidating data in OneLake also simplifies governance. Fabric integrates with Microsoft Purview, so sensitivity labels can be applied to Fabric items and your Fabric estate can be cataloged alongside your other data sources. Instead of governing each Azure service separately, you manage classification and discovery from one place.

Technical Validation and Performance Benchmarking

A successful Azure to Microsoft Fabric migration requires more than moving code; it requires evidence that the new system performs at least as well as the old one. Performance benchmarking is therefore a critical part of validation. Compare the execution times of your most complex Synapse queries against their Fabric equivalents, using the same data volumes and several repeated runs. Fabric's Spark and SQL engines can deliver meaningful speed improvements, but results vary by workload, so measure rather than assume. This data-driven approach lets the business see a concrete benefit from the migration effort.
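A benchmark comparison of this kind can be sketched in a few lines. The harness below is a minimal example, not a prescribed tool: `time_query`, `speedup`, `run_on_synapse`, and `run_on_fabric` are hypothetical names, and the two query functions are stand-ins that sleep instead of calling a real database. In practice you would replace them with functions that execute the same query on each platform.

```python
import statistics
import time
from typing import Callable


def time_query(run_query: Callable[[], object],
               repeats: int = 5, warmups: int = 1) -> float:
    """Return the median wall-clock seconds of run_query over `repeats` runs."""
    for _ in range(warmups):
        run_query()  # warm caches so the first run does not skew the result
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        run_query()
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def speedup(baseline_seconds: float, candidate_seconds: float) -> float:
    """Ratio of baseline to candidate time; above 1.0 means the candidate is faster."""
    if candidate_seconds <= 0:
        raise ValueError("candidate_seconds must be positive")
    return baseline_seconds / candidate_seconds


# Stand-ins for real query calls (assumptions for this sketch only).
def run_on_synapse() -> None:
    time.sleep(0.002)


def run_on_fabric() -> None:
    time.sleep(0.001)


synapse_s = time_query(run_on_synapse)
fabric_s = time_query(run_on_fabric)
print(f"Synapse {synapse_s:.4f}s, Fabric {fabric_s:.4f}s, "
      f"speedup {speedup(synapse_s, fabric_s):.2f}x")
```

Using the median of several runs, after a warm-up, keeps a single slow run or a cold cache from deciding the comparison.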

Strategic Planning and the Roadmap to Success

No migration of this scale should be attempted without a clear plan. An effective Azure to Fabric roadmap includes four key phases: Assessment, Foundation Building, Workload Migration, and Optimization. By following this structured approach, you can manage risk and ensure a smooth transition for your users.

Using a free trial of the available migration tools early in the process can help you understand the specific technical requirements of your environment.
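One way to keep a migration honest about sequencing is to treat the four phases as an ordered list with explicit exit criteria. The sketch below is only an illustration of that idea: the `Phase` enum mirrors the phase names above, while `next_phase` and the notion of a single "exit criteria met" flag are simplifying assumptions.

```python
from enum import IntEnum


class Phase(IntEnum):
    """The four roadmap phases, in the order they are carried out."""
    ASSESSMENT = 1
    FOUNDATION_BUILDING = 2
    WORKLOAD_MIGRATION = 3
    OPTIMIZATION = 4


def next_phase(current: Phase, exit_criteria_met: bool) -> Phase:
    """Advance one phase only when the current phase's exit criteria are met."""
    if not exit_criteria_met or current is Phase.OPTIMIZATION:
        return current  # stay put: either not ready, or already in the last phase
    return Phase(current + 1)


phase = Phase.ASSESSMENT
phase = next_phase(phase, exit_criteria_met=False)  # inventory not finished yet
phase = next_phase(phase, exit_criteria_met=True)   # assessment signed off
print(phase.name)
```

Gating each transition on explicit criteria, rather than on a calendar date, is what keeps workloads from being migrated onto a foundation that is not ready for them.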

Embracing the Future of Analytics

The move from Azure to Fabric is a move toward a simpler, more powerful future. By migrating from Azure to Microsoft Fabric, you give your team the tools it needs to innovate at the speed of business, replacing the complexity of separately managed services with an integrated SaaS platform. If you are ready to take the next step in your data journey and want a professional, low-risk transition, our contact page is the place to start the conversation.

Frequently Asked Questions

Q. Is Microsoft Fabric just a rebranding of Synapse?

A. No. While it incorporates some Synapse technology, Fabric is a new, SaaS-based architecture built from the ground up for OneLake. It offers a much higher level of integration and a completely different management model than legacy Synapse PaaS.

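Q. Can I still use SQL Server Management Studio (SSMS) with Fabric?

A. Yes. The Fabric Data Warehouse and the Lakehouse SQL analytics endpoint both accept connections from SSMS and other standard SQL tools, so your team can keep the interface it is most comfortable with. Note that the Lakehouse SQL analytics endpoint is read-only for table data; writes to Lakehouse tables go through Spark or pipelines.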

Q. What is the "OneLake File Explorer"?

A. The OneLake File Explorer is a Windows application that lets you interact with your data in OneLake just as you would with files in OneDrive or File Explorer, making it easy for users to upload and manage data.

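Q. How does security work for "Shortcuts"?

A. It depends on the shortcut type. Shortcuts that point to other OneLake locations pass the calling user's identity to the target, so a user without permission on the target cannot read it through the shortcut. Shortcuts to external storage such as ADLS Gen2 use a delegated model: access is authorized with the credential stored in the shortcut's connection, so you should control who can read the Fabric item that contains the shortcut in order to keep your security perimeter intact.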

Q. Can I run Fabric on-premises?

A. Microsoft Fabric is a cloud-native SaaS platform and is not available for on-premises installation. However, you can use the on-premises data gateway to connect your local data sources to the Fabric cloud environment.

Q. How do I choose between a Lakehouse and a Data Warehouse in Fabric?

A. A Lakehouse is ideal for Spark-heavy workloads and unstructured data, while a Data Warehouse is best for teams that prefer a T-SQL-first approach and highly structured data. Because both store data in Delta format in OneLake, they can easily coexist and share data.

Ready to Modernize Your Data Stack?

Explore our specialized Azure to Microsoft Fabric migration services or get a custom roadmap for your organization.