Informatica to Databricks Migration

The transition from Informatica to Databricks represents a fundamental shift from legacy, server-bound ETL (Extract, Transform, Load) to a modern, scalable Data Lakehouse architecture. As enterprises grapple with increasing data volumes and the need for real-time AI capabilities, the Informatica to Databricks migration has become a strategic priority for CTOs and Data Architects seeking to lower Total Cost of Ownership (TCO) while accelerating innovation.

1. The Strategic Imperative: Why Migrate from Informatica to Databricks?

For decades, Informatica has been the industry standard for enterprise data integration. However, the rise of the Databricks Lakehouse has redefined what is possible in the data space. Understanding the core differences is essential for a successful migration strategy.

Legacy ETL vs. The Modern Lakehouse

Informatica typically operates on a "hub-and-spoke" model, often requiring dedicated servers or heavy middleware. Processing occurs within the Informatica engine, which can become a bottleneck as data volumes grow.

Databricks, powered by Apache Spark, leverages a decoupled storage and compute architecture. It combines the best elements of data lakes (scale and flexibility) with data warehouses (performance and reliability).

Key Technical Comparisons

| Feature | Informatica (PowerCenter/IICS) | Databricks (Lakehouse) |
|---|---|---|
| Architecture | Traditional ETL / Proprietary Engine | Unified Lakehouse (Delta Lake) |
| Processing Power | Server-bound / Rigid Scaling | Distributed Spark / Auto-scaling |
| Language Support | GUI-based / Proprietary Transformation | SQL, Python, Scala, R |
| AI/ML Integration | Limited / Add-on modules | Native integration with MLflow |
| Cost Model | License-heavy / Predictable | Consumption-based / Performance-tuned |

2. Technical Nuances of the Migration

A successful Informatica to Databricks migration is not a simple "lift and shift." It involves translating metadata-driven workflows into code-based or SQL-driven pipelines.

From Mappings to Notebooks

In Informatica PowerCenter, mapping logic is held in the repository and exported as XML. In Databricks, the same logic is rebuilt as Delta Live Tables (DLT) pipelines or PySpark notebooks.

- Mapping Logic: Informatica’s "Source Qualifiers" and "Expression Transformations" must be re-engineered into Spark SQL queries or DataFrame operations.

- UDFs (User Defined Functions): Most Informatica expression functions have built-in Spark SQL equivalents. Reserve Python or Scala UDFs for logic with no built-in counterpart, since UDFs typically run slower than native functions.
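As an illustration of this re-engineering, the sketch below rewrites a hypothetical Expression transformation and Source Qualifier filter as a single Spark SQL statement. The port names, table name, and columns are invented for the example; `spark` is assumed to be the session object that Databricks notebooks provide.

```python
# Hypothetical Informatica logic being replaced:
#   Source Qualifier filter:  ACTIVE_FLAG = 'Y'
#   Expression ports:
#     NET_AMOUNT  = AMOUNT - IIF(ISNULL(DISCOUNT), 0, DISCOUNT)
#     STATUS_DESC = IIF(ISNULL(STATUS), 'UNKNOWN', UPPER(STATUS))


def build_order_query(source_table: str) -> str:
    """Return a Spark SQL statement that mirrors the ports above."""
    return (
        "SELECT order_id,\n"
        "       amount - coalesce(discount, 0) AS net_amount,\n"
        "       CASE WHEN status IS NULL THEN 'UNKNOWN'\n"
        "            ELSE upper(status) END AS status_desc\n"
        f"FROM {source_table}\n"
        "WHERE active_flag = 'Y'"
    )


def run_order_query(spark, source_table: str):
    """Execute the query; `spark` is the SparkSession available in a notebook."""
    return spark.sql(build_order_query(source_table))
```

Both derived columns use built-in functions (`coalesce`, `upper`, `CASE`), so no UDF is needed for this case.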

Handling Metadata and Orchestration

One of the biggest challenges in an Informatica to Databricks project is the orchestration layer. Informatica defines workflows, worklets, and sessions in Workflow Manager, whereas Databricks uses Databricks Workflows (jobs and tasks) or external orchestrators such as Azure Data Factory (ADF) or Airflow. Migrating these requires mapping every task dependency, schedule, and error-handling path before cutover.
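Dependency mapping can be sketched with a plain dependency graph. The example below uses hypothetical task names, derives a valid execution order with Python's standard `graphlib` module, and shapes the graph like the `tasks` list of a Databricks job definition (`task_key` and `depends_on`). Everything else a real job needs (notebook paths, clusters, retries) is left out.

```python
from graphlib import TopologicalSorter

# Hypothetical workflow: each task maps to the set of tasks it depends on.
dependencies = {
    "load_customers": set(),
    "load_orders": set(),
    "build_order_facts": {"load_customers", "load_orders"},
    "refresh_reporting_tables": {"build_order_facts"},
}


def execution_order(deps):
    """Return tasks in an order that respects every dependency.

    Raises graphlib.CycleError if the graph contains a cycle.
    """
    return list(TopologicalSorter(deps).static_order())


def to_job_tasks(deps):
    """Shape the graph like the `tasks` list of a job definition."""
    return [
        {
            "task_key": name,
            "depends_on": [{"task_key": d} for d in sorted(upstream)],
        }
        for name, upstream in deps.items()
    ]
```

Checking the graph for cycles before building the job is a cheap way to catch dependency errors introduced during translation.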

3. The 5-Step Professional Migration Framework

We utilize a battle-tested framework to ensure that your Informatica to Databricks migration is seamless, secure, and optimized for high-performance analytics.

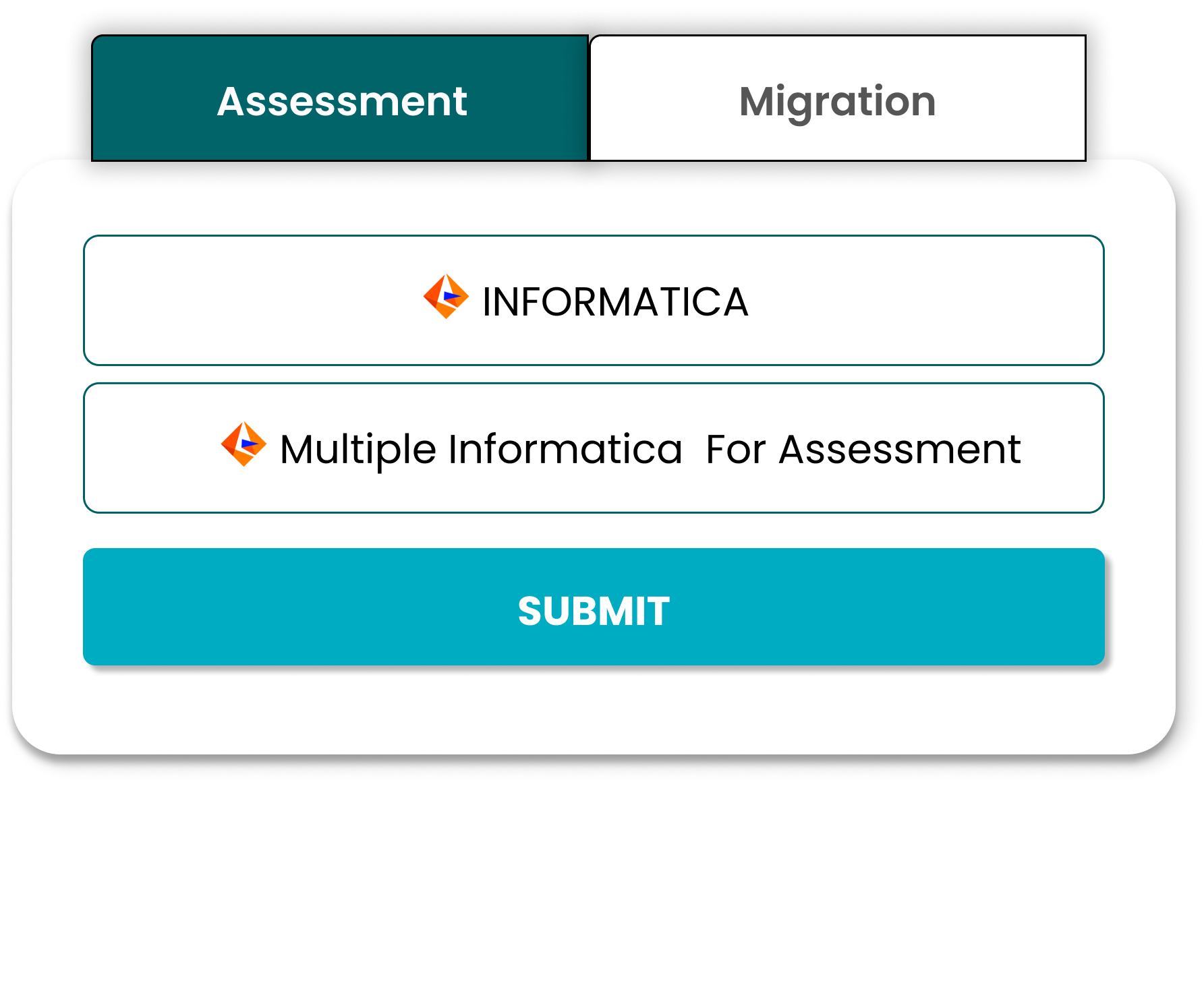

Phase 1: Assessment and Discovery

We begin by auditing your existing Informatica repository.

- Redundancy Check: Identify unused workflows to avoid migrating unnecessary pipelines.

- Complexity Scoring: Categorize mappings into Simple, Medium, and Complex.

- Data Lineage Mapping: Ensure downstream tools remain unaffected.
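A complexity score can be approximated from a repository export. The sketch below assumes a PowerCenter-style XML export in which each `MAPPING` element contains `TRANSFORMATION` elements; the sample XML, mapping names, and thresholds are all hypothetical and would need tuning against a real repository.

```python
import xml.etree.ElementTree as ET

# Hypothetical, heavily trimmed export used only for illustration.
SAMPLE_EXPORT = """
<POWERMART>
  <REPOSITORY NAME="REP_DEV">
    <FOLDER NAME="SALES">
      <MAPPING NAME="m_load_customers">
        <TRANSFORMATION NAME="SQ_CUSTOMERS" TYPE="Source Qualifier"/>
        <TRANSFORMATION NAME="EXP_CLEAN" TYPE="Expression"/>
      </MAPPING>
      <MAPPING NAME="m_order_facts">
        <TRANSFORMATION NAME="SQ_ORDERS" TYPE="Source Qualifier"/>
        <TRANSFORMATION NAME="LKP_CUSTOMER" TYPE="Lookup Procedure"/>
        <TRANSFORMATION NAME="JNR_ITEMS" TYPE="Joiner"/>
        <TRANSFORMATION NAME="AGG_TOTALS" TYPE="Aggregator"/>
        <TRANSFORMATION NAME="RTR_REGION" TYPE="Router"/>
      </MAPPING>
    </FOLDER>
  </REPOSITORY>
</POWERMART>
"""


def score_mappings(xml_text, simple_max=3, medium_max=8):
    """Return {mapping_name: (transformation_count, category)}.

    The thresholds are illustrative defaults, not an industry standard.
    """
    root = ET.fromstring(xml_text.strip())
    scores = {}
    for mapping in root.iter("MAPPING"):
        count = len(mapping.findall("TRANSFORMATION"))
        if count <= simple_max:
            category = "Simple"
        elif count <= medium_max:
            category = "Medium"
        else:
            category = "Complex"
        scores[mapping.get("NAME")] = (count, category)
    return scores
```

A fuller score would likely also weight transformation types (for example, Joiner or Router more heavily than Expression), which this sketch does not attempt.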

Phase 2: Environment and Security Setup

Security is critical. We configure the Databricks workspace using Unity Catalog to provide fine-grained governance across data assets, replacing legacy security models.
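As a rough sketch of what fine-grained governance looks like in practice, the helper below generates Unity Catalog `GRANT` statements that give a group read access to one schema. The catalog, schema, and group names are placeholders, and the statements would be run through `spark.sql` or a SQL editor by someone with the right to grant privileges.

```python
def read_access_grants(catalog: str, schema: str, group: str) -> list:
    """Return the GRANT statements for read-only access to one schema.

    A reader needs USE CATALOG and USE SCHEMA in addition to SELECT.
    """
    return [
        f"GRANT USE CATALOG ON CATALOG {catalog} TO `{group}`",
        f"GRANT USE SCHEMA ON SCHEMA {catalog}.{schema} TO `{group}`",
        f"GRANT SELECT ON SCHEMA {catalog}.{schema} TO `{group}`",
    ]


# Placeholder names, purely for illustration.
for statement in read_access_grants("main", "sales", "data-analysts"):
    print(statement)
```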

Phase 3: Logic Conversion and Transformation

This is the core of the Informatica to Databricks transition.

- Automated Conversion: Convert Informatica XMLs into Spark-compatible code.

- Manual Re-engineering: Rewrite complex logic to fully utilize Spark’s distributed processing.

Phase 4: Data Validation and Reconciliation

Automated testing compares the outputs of legacy workflows against the new Databricks pipelines (row counts, key-level matches, and column-level checksums) so that discrepancies are caught and resolved before cutover.
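One way to sketch such a reconciliation check is to compare row counts plus an order-independent checksum of the rows from each system. The example below works on small in-memory row lists for clarity; the function names are hypothetical, and on real tables the same idea would be expressed as aggregate queries run on each side.

```python
import hashlib


def table_fingerprint(rows):
    """Return (row_count, checksum) for an iterable of row tuples.

    Per-row hashes are summed modulo 2**64, so row order does not matter
    and duplicate rows still change the result.
    """
    count, checksum = 0, 0
    for row in rows:
        digest = hashlib.sha256(repr(tuple(row)).encode("utf-8")).digest()
        checksum = (checksum + int.from_bytes(digest[:8], "big")) % 2**64
        count += 1
    return count, checksum


def reconcile(legacy_rows, migrated_rows):
    """Compare two result sets and report whether they agree."""
    legacy_count, legacy_sum = table_fingerprint(legacy_rows)
    new_count, new_sum = table_fingerprint(migrated_rows)
    return {
        "legacy_count": legacy_count,
        "migrated_count": new_count,
        "counts_match": legacy_count == new_count,
        "content_match": legacy_count == new_count and legacy_sum == new_sum,
    }
```

Because the hash is taken over `repr`, values must be normalized to the same types on both sides first (for example, `1` and `1.0` hash differently).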

Phase 5: Performance Tuning and Optimization

We tune cluster sizing and enable the Photon engine where workloads benefit, aiming for faster and more cost-efficient runs than the legacy environment.
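A cluster definition with auto-scaling and Photon enabled might look like the sketch below. The field names follow the Databricks cluster specification (`autoscale`, `runtime_engine`, `autotermination_minutes`); the worker counts, node type, and runtime version are placeholder values to be replaced with ones valid for your cloud and workspace.

```python
def cluster_spec(min_workers, max_workers, node_type_id, spark_version,
                 autotermination_minutes=30):
    """Build a cluster definition that scales within a worker range."""
    if min_workers < 0 or min_workers > max_workers:
        raise ValueError("expected 0 <= min_workers <= max_workers")
    return {
        "spark_version": spark_version,
        "node_type_id": node_type_id,
        "autoscale": {"min_workers": min_workers, "max_workers": max_workers},
        "runtime_engine": "PHOTON",
        "autotermination_minutes": autotermination_minutes,
    }


# Placeholder values for illustration only.
spec = cluster_spec(2, 8, node_type_id="i3.xlarge",
                    spark_version="15.4.x-scala2.12")
```

Starting from a small minimum and letting the cluster scale up is usually cheaper than sizing it to match the legacy server.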

4. Addressing Architect-Level Challenges

Hybrid Cloud and Multi-Cloud Architectures

Many enterprises are in a state of flux, running on-premises Informatica instances while leveraging AWS, Azure, or GCP. Because Databricks runs on all three clouds, it suits hybrid and multi-cloud strategies. During migration, make sure data moves between on-premises systems and the cloud over private connectivity (for example, a VPN, a dedicated interconnect, or the cloud provider's Private Link service) rather than the public internet.

Real-Time Data Processing

Informatica PowerCenter is primarily a batch-processing tool. By migrating to Databricks, organizations can unlock Structured Streaming capabilities. This enables a shift from scheduled batch updates to real-time insights, allowing faster and more informed decision-making.
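A minimal Structured Streaming sketch of this shift is shown below: it reads new rows from one table as a stream and continuously appends them to another. The table names and checkpoint path are hypothetical, `spark` is assumed to be the session available in a Databricks notebook, and the one-minute trigger is an arbitrary choice.

```python
def start_incremental_load(spark, source_table, target_table, checkpoint_path):
    """Start a streaming query that copies new rows from source to target.

    The checkpoint location lets the query resume where it left off
    after a restart instead of reprocessing the whole source.
    """
    return (
        spark.readStream.table(source_table)
        .writeStream
        .option("checkpointLocation", checkpoint_path)
        .trigger(processingTime="1 minute")
        .toTable(target_table)
    )


# Example call inside a notebook (names are placeholders):
# query = start_incremental_load(
#     spark, "bronze.orders", "silver.orders", "/tmp/checkpoints/orders"
# )
```

Any transformations from the original mapping would be applied to the stream between the read and the write.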

5. Common Pitfalls to Avoid

- Over-provisioning Clusters: Avoid mirroring legacy server specs. Use auto-scaling to optimize costs.

- Ignoring Delta Lake: Moving data alone is not enough. Use Delta Lake for ACID transactions and reliability.

- Lack of Governance: Ensure Unity Catalog is implemented early to prevent data management issues.
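To make the Delta Lake point concrete, the sketch below builds a `MERGE INTO` statement, which Delta Lake runs as a single ACID transaction. It is one possible replacement for an insert-or-update load; the table names and key columns are hypothetical, and `UPDATE SET *` / `INSERT *` assume the source and target share the same columns.

```python
def build_merge_sql(target: str, source: str, key_columns: list) -> str:
    """Return a Delta Lake MERGE statement that upserts source into target."""
    if not key_columns:
        raise ValueError("at least one key column is required")
    on_clause = " AND ".join(f"t.{col} = s.{col}" for col in key_columns)
    return (
        f"MERGE INTO {target} AS t\n"
        f"USING {source} AS s\n"
        f"ON {on_clause}\n"
        "WHEN MATCHED THEN UPDATE SET *\n"
        "WHEN NOT MATCHED THEN INSERT *"
    )


# Placeholder table names; run the result with spark.sql(...) in a notebook.
print(build_merge_sql("silver.customers", "staging.customers", ["customer_id"]))
```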

6. Business Value: The ROI of Informatica to Databricks

Moving from Informatica to a unified Databricks architecture delivers measurable business benefits:

- Reduced Licensing Costs: Eliminate high maintenance fees associated with legacy ETL tools.

- Increased Engineering Velocity: Python and SQL enable faster development compared to proprietary tools.

- Future-Proofing for AI: Databricks supports modern AI and LLM workloads that legacy systems cannot.

7. Conclusion: Modernize with Confidence

The Informatica to Databricks migration is more than a technical upgrade; it is an evolution of your data culture. By moving to a Lakehouse architecture, you empower your teams to work on a single, high-performance platform.

At Innovational Office Solution, we bring decades of experience in both legacy ETL and modern cloud analytics, ensuring your migration is delivered on time, within budget, and without data loss.

Ready to Modernize Your Data Stack?

Transitioning from Informatica doesn't have to be a risk. Contact our migration architects today for a comprehensive assessment of your ETL environment.

Schedule a Technical Consultation:

Start Your Migration

Frequently Asked Questions (FAQ)

Q: Can I keep my reports on-premises after migrating?

A: Yes. You can use Power BI Report Server for an on-premises footprint, though the full feature set (AI dashboards, cloud collaboration) is optimized for the Power BI Service.

Q: Does Power BI support all SSRS data sources?

A: Power BI supports a wider range of data sources than SSRS, including cloud APIs, NoSQL databases, and SaaS platforms like Salesforce, along with traditional SQL sources.

Q: How long does a typical migration take?

A: A migration timeline depends on the number of reports and complexity. A standard migration of around 50 reports typically takes 4 to 8 weeks.

Why Migrate Informatica to Databricks?

Unlock the full potential of your data with the Databricks Lakehouse platform.

Lower Licensing Costs

Reduce expensive legacy ETL tool licensing fees by moving to a unified, open analytics platform.

Spark-Based Scalability

Leverage the horizontal scalability of Apache Spark for high-volume data processing and transformations.

Lakehouse Architecture

Combine the best elements of data lakes and data warehouses for simplified data management and governance.

AI & ML Integration

Seamlessly integrate ETL pipelines with advanced machine learning and AI capabilities.

Our Informatica to Databricks Migration Strategy

Step 1 – Workflow Assessment

- Comprehensive mapping inventory of all Informatica workflows

- Analysis of complex transformations and logic

- Review of scheduling dependencies

Step 2 – Conversion to Spark

- PySpark development to replace Informatica logic

- Implementation of Delta Lake for data reliability

- Setting up job orchestration in Databricks Workflows

Step 3 – Validation

- Rigorous data reconciliation between old and new systems

- Performance optimization for Spark jobs

Business Impact

Cost Reduction

Significantly lower total cost of ownership by modernizing your data stack.

Performance Improvement

Accelerate data processing with the Databricks Photon engine.

Advanced Analytics Readiness

Prepare your data for predictive analytics and data science initiatives.

Start Your Informatica to Databricks Migration

Modernize your ETL pipelines with Pulse Convert.