The Strategic Transition: Azure to Microsoft Fabric Migration Guide for Modern Enterprises

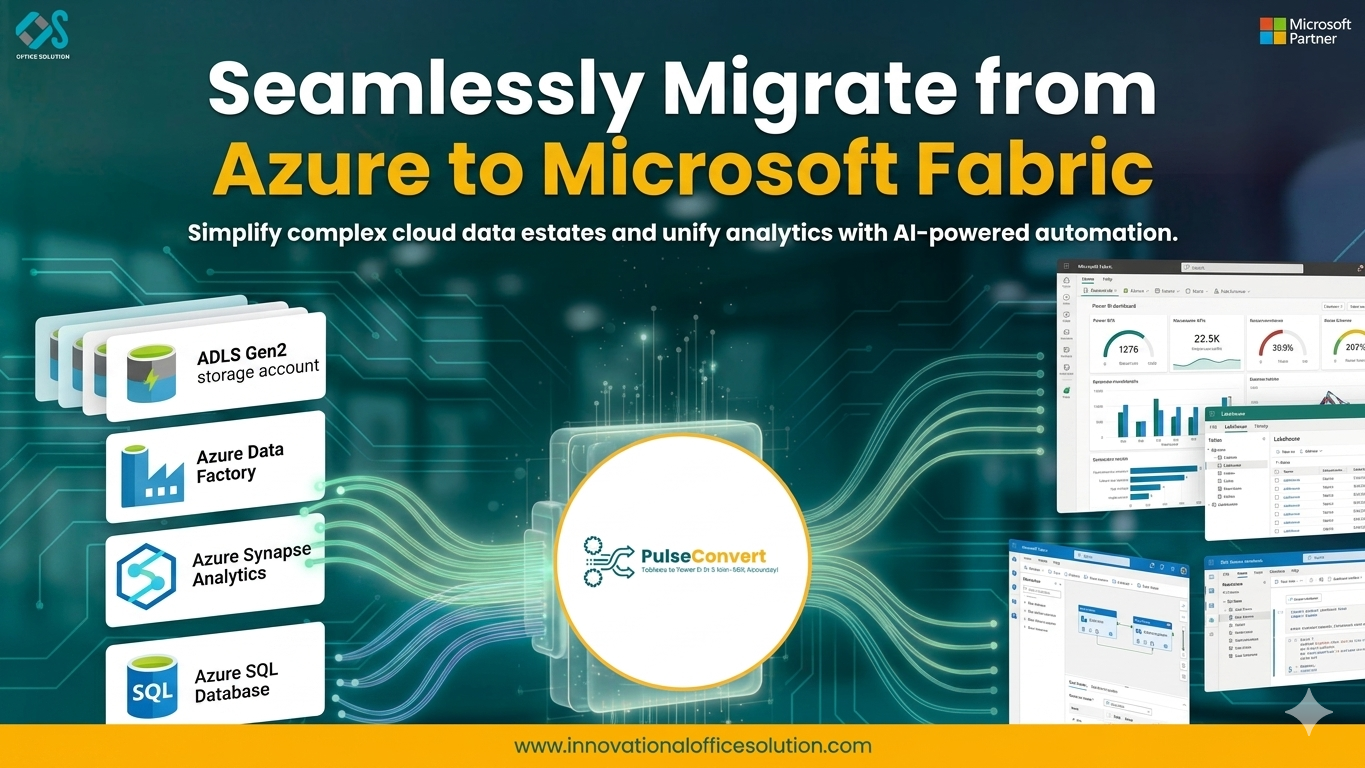

The architecture of enterprise data management is changing. For years, organizations have assembled data estates from separate Azure services. Each tool is robust on its own, but the operational burden of maintaining the "glue" between them (complex networking, fragmented security, and constant data movement) has reached a tipping point. An Azure to Microsoft Fabric migration addresses this complexity. It is not merely a version update; it is a shift from managing infrastructure to managing insights. By moving from Azure to Fabric, enterprises can work toward a unified, SaaS-based data ecosystem where every persona, from the data engineer to the business analyst, works within a single, cohesive environment.

The Business Imperative for Migrating from Azure to Microsoft Fabric

The primary catalyst for migrating from Azure to Microsoft Fabric is the elimination of the "integration tax." In a traditional Azure data stack, teams often manage Azure Data Factory for ingestion, Azure Synapse for processing, and Power BI for reporting. Each service requires its own capacity, security configuration, and monitoring. This fragmentation slows time-to-value and increases costs. By transitioning from Azure to Fabric, you move to a platform where OneLake serves as the single data foundation and the compute engines are integrated by design. This roadmap is explored in depth in the Azure to Microsoft Fabric migration guide, which emphasizes how consolidation translates into greater business agility and a lower total cost of ownership.

OneLake: The "OneDrive for Data" at the Core of the Migration

OneLake is at the center of every Azure to Fabric migration. In many legacy Azure estates, data is spread across several ADLS Gen2 storage accounts, which leads to redundant copies and inconsistent governance. OneLake instead provides a single, logical data lake for the whole organization. Each tenant has exactly one OneLake, and all Fabric compute engines, including SQL, Spark, and Kusto, read and write it in the open Delta Parquet format. Because every engine works on the same copy of the data, you no longer need to move data between services to run a different kind of analysis, which is one of the main advantages of migrating from Azure to Microsoft Fabric.
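To make the "single logical lake" idea concrete, the sketch below builds the ABFS-style address under which a Lakehouse table is commonly reached in OneLake. The workspace, lakehouse, and table names are hypothetical, and the sketch assumes name-based addressing (names without spaces); GUID-based addressing follows a different layout, so treat this as an illustration rather than a complete reference.

```python
# Host name used by OneLake's ADLS-compatible endpoint.
ONELAKE_HOST = "onelake.dfs.fabric.microsoft.com"


def onelake_table_uri(workspace: str, lakehouse: str, table: str) -> str:
    """Build an ABFS-style URI for a Delta table in a Fabric Lakehouse.

    Assumes name-based addressing; all three names are illustrative inputs.
    """
    for label, value in (("workspace", workspace),
                         ("lakehouse", lakehouse),
                         ("table", table)):
        if not value or "/" in value:
            raise ValueError(f"invalid {label} name: {value!r}")
    return f"abfss://{workspace}@{ONELAKE_HOST}/{lakehouse}.Lakehouse/Tables/{table}"


# Hypothetical names, for illustration only.
uri = onelake_table_uri("SalesWorkspace", "SalesLakehouse", "orders")
print(uri)
```

Any engine that understands ADLS Gen2 paths and the Delta format can, in principle, be pointed at such an address, which is what lets several engines share one copy of the data.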

Architectural Evolution: Moving from PaaS to SaaS Simplicity

A technical cornerstone of the Azure to Microsoft Fabric migration is the shift from Platform-as-a-Service (PaaS) to Software-as-a-Service (SaaS). In the traditional Azure model, developers are responsible for provisioning, scaling, and patching individual clusters and workspaces. Microsoft Fabric abstracts this complexity. The platform manages the underlying infrastructure automatically, allowing your data professionals to spend their time building logic and uncovering insights rather than managing infrastructure. This architectural simplicity is a core theme in professional overviews of migrating from Azure stacks to Fabric.

Auditing Your Existing Azure Inventory for a Smooth Transition

A successful Azure to Fabric migration starts with a comprehensive audit of your current data estate. This involves mapping every active Data Factory pipeline, every Synapse SQL script, and every Databricks notebook currently in production. Understanding the dependencies between these components is vital to avoiding downtime. A well-structured Azure to Microsoft Fabric migration guide suggests that organizations categorize their workloads by complexity and business priority. Workloads that are simple to convert yet visible to the business are the "low-hanging fruit": migrating them first produces early successes that build stakeholder confidence in the new platform.
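One way to turn the audit into a migration order is sketched below. The `Workload` fields, the 1-to-5 scoring scale, and the thresholds are all assumptions made for illustration; a real inventory would carry whatever attributes your audit actually captures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    kind: str              # e.g. "adf_pipeline", "synapse_sql", "databricks_notebook"
    complexity: int        # 1 (simple) .. 5 (complex), assigned during the audit
    priority: int          # 1 (low) .. 5 (high business priority)
    depends_on: tuple = () # names of upstream workloads still on the legacy stack


def migration_waves(workloads, max_complexity=2, min_priority=3):
    """Split the inventory into quick wins and everything else.

    A quick win is simple, matters to the business, and has no upstream
    dependencies. The thresholds are illustrative defaults.
    """
    quick_wins = [
        w for w in workloads
        if w.complexity <= max_complexity
        and w.priority >= min_priority
        and not w.depends_on
    ]
    rest = [w for w in workloads if w not in quick_wins]
    # Most valuable quick wins first; simplest of the remainder first.
    quick_wins.sort(key=lambda w: (-w.priority, w.complexity))
    rest.sort(key=lambda w: (w.complexity, -w.priority))
    return quick_wins, rest


# Hypothetical inventory entries.
inventory = [
    Workload("daily_sales_copy", "adf_pipeline", complexity=1, priority=4),
    Workload("finance_close", "synapse_sql", complexity=5, priority=5),
    Workload("region_rollup", "adf_pipeline", complexity=2, priority=3,
             depends_on=("daily_sales_copy",)),
    Workload("ref_data_load", "adf_pipeline", complexity=1, priority=5),
]

first_wave, later = migration_waves(inventory)
print([w.name for w in first_wave])
print([w.name for w in later])
```

Treating dependencies as a blocker for the first wave is a deliberate simplification: it keeps early migrations self-contained so a failure cannot ripple into workloads that have not moved yet.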

Logic Conversion: Mapping Synapse and Data Factory to Fabric

The most labor-intensive phase of migrating from Azure to Microsoft Fabric is the conversion of existing ETL and analytical logic. While Azure Data Factory pipelines share a lineage with Fabric Data Factory, there are subtle differences in activity support and connector availability that must be addressed. Similarly, your Synapse SQL views and stored procedures must be adapted for the Fabric Data Warehouse or Lakehouse SQL endpoint. This is not just a migration; it is an opportunity to refactor and optimize. By utilizing specialized migration services, organizations can ensure their code follows modern best practices for the Lakehouse era.
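As a rough illustration of the first step of this conversion work, the sketch below walks an exported Azure Data Factory pipeline definition and lists which activity types it uses, flagging the ones that deserve a closer look. The `NEEDS_REVIEW` set is an illustrative assumption, not an authoritative compatibility list; check the current Fabric documentation before relying on it.

```python
import json
from collections import Counter

# Hypothetical review list: activity types assumed to need manual attention.
NEEDS_REVIEW = {"ExecuteSSISPackage", "ExecuteDataFlow"}

# Keys under "typeProperties" that hold nested activities in container
# activities such as ForEach, Until, IfCondition, and Switch.
NESTED_KEYS = ("activities", "ifTrueActivities", "ifFalseActivities",
               "defaultActivities")


def iter_activities(activities):
    """Yield every activity, descending into container activities."""
    for act in activities:
        yield act
        props = act.get("typeProperties", {})
        for key in NESTED_KEYS:
            yield from iter_activities(props.get(key, []))
        for case in props.get("cases", []):  # Switch cases
            yield from iter_activities(case.get("activities", []))


def summarize_pipeline(pipeline_json: str):
    """Return (activity type counts, sorted names of flagged activities)."""
    pipeline = json.loads(pipeline_json)
    top_level = pipeline.get("properties", {}).get("activities", [])
    acts = list(iter_activities(top_level))
    counts = Counter(a.get("type", "Unknown") for a in acts)
    flagged = sorted(a.get("name", "<unnamed>") for a in acts
                     if a.get("type") in NEEDS_REVIEW)
    return counts, flagged


# A made-up pipeline definition in the shape of an ADF export.
sample = json.dumps({
    "name": "LoadSales",
    "properties": {"activities": [
        {"name": "CopyOrders", "type": "Copy"},
        {"name": "PerRegion", "type": "ForEach", "typeProperties": {
            "activities": [
                {"name": "TransformRegion", "type": "ExecuteDataFlow"},
            ]}},
    ]},
})

counts, flagged = summarize_pipeline(sample)
print(dict(counts))
print(flagged)
```

Running a scan like this across every exported pipeline gives a quick estimate of how much of the estate can move largely as-is and how much needs redesign.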

Security and Governance: Unifying the Fragmented Perimeter

In a legacy Azure architecture, security is often a complicated puzzle of Service Principals, Managed Identities, and separate RBAC role assignments across several services. An Azure to Microsoft Fabric migration brings security under a single, centralized model. Integration with Microsoft Entra ID and Purview enables end-to-end data lineage and sensitivity labels that stay with the data regardless of which compute engine accesses it. This simplified approach to governance is one of the main reasons highly regulated companies are accelerating their move from Azure to Fabric.

Validation and Parallel Testing Strategies

Trust in data is non-negotiable. During the Azure to Fabric transition, a rigorous validation phase is required. Parallel testing involves running your existing Azure pipelines alongside your new Fabric pipelines for several cycles to confirm that the outputs match. This is the time to verify data types, precision, and latency. If performance issues arise, Fabric's capacity model, measured in Capacity Units (CUs), lets you scale the capacity up to meet demand without re-architecting the workload. Testing should also extend to the end-user layer, ensuring that Power BI reports show the correct data and perform at least as well as before.
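A minimal sketch of the output comparison at the heart of parallel testing follows. It assumes both result sets have already been fetched into lists of dictionaries and share a key column; the function names, the sample rows, and the six-decimal rounding are illustrative choices, not a prescribed method.

```python
import hashlib
from decimal import Decimal


def normalize(value, float_places=6):
    """Render a value canonically so equal data hashes equally on both sides."""
    if value is None:
        return "<NULL>"
    if isinstance(value, (float, Decimal)):
        # Fixed-point rendering hides representation differences such as
        # float 10.0 versus Decimal("10.00").
        return f"{value:.{float_places}f}"
    return str(value)


def row_fingerprints(rows, key_columns):
    """Map each row's key tuple to a SHA-256 hash of its normalized content."""
    out = {}
    for row in rows:
        key = tuple(row[c] for c in key_columns)
        canonical = "|".join(f"{col}={normalize(row[col])}" for col in sorted(row))
        out[key] = hashlib.sha256(canonical.encode("utf-8")).hexdigest()
    return out


def compare_outputs(legacy_rows, fabric_rows, key_columns):
    """Report keys that are missing, extra, or different on the Fabric side."""
    legacy = row_fingerprints(legacy_rows, key_columns)
    fabric = row_fingerprints(fabric_rows, key_columns)
    return {
        "missing_in_fabric": sorted(legacy.keys() - fabric.keys()),
        "extra_in_fabric": sorted(fabric.keys() - legacy.keys()),
        "mismatched": sorted(k for k in legacy.keys() & fabric.keys()
                             if legacy[k] != fabric[k]),
    }


# Made-up outputs from one parallel run.
legacy_rows = [{"id": 1, "amount": 10.0},
               {"id": 2, "amount": 5.25},
               {"id": 3, "amount": 7.0}]
fabric_rows = [{"id": 1, "amount": Decimal("10.00")},
               {"id": 2, "amount": 5.26},
               {"id": 4, "amount": 1.0}]

report = compare_outputs(legacy_rows, fabric_rows, key_columns=["id"])
print(report)
```

Rounding before hashing is a trade-off: it prevents false alarms from harmless type changes, but it also hides differences smaller than the chosen precision, so the number of decimal places should match what the business actually considers significant.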

Accelerating the Journey with Automation and Free Trials

Manual migration of a large data estate is time-consuming and prone to human error. To mitigate these risks, organizations should leverage automated migration utilities that can parse pipeline definitions and SQL scripts to generate Fabric-native versions. For teams that want to explore these capabilities without initial commitment, a free trial of migration tools can provide a valuable proof-of-concept. This allows you to see exactly how your specific Azure Data Factory pipelines will function in the Fabric environment before the full-scale migration begins.

Change Management: Preparing the Team for a Unified Workspace

The technical transition from Azure to Fabric comes with a major cultural shift. In Microsoft Fabric, the boundaries between roles blur: data scientists, data engineers, and BI analysts work together in one workspace. This calls for a fresh approach to upskilling and change management. Training should focus on the integrated nature of the platform; analysts should be shown how to use Direct Lake mode for fast reporting, and engineers should be taught how to use notebooks for Lakehouse development. Cultivating this collaborative mindset is essential to realizing the full potential of the unified analytics platform.

Cutover, Stabilization, and Legacy Retirement

The final step in the Azure to Microsoft Fabric migration is the official cutover. This involves repointing downstream applications to the new Fabric endpoints and scheduling a final data sync. Following the cutover, a stabilization period is necessary to monitor system performance and address any unforeseen issues. Once the system is confirmed stable, the legacy Azure services can be decommissioned. This leads to immediate operational savings and a much cleaner technical environment. For organizations seeking expert guidance on these final, critical steps, visiting the contact us page ensures a partnership that leads to long-term success.

Frequently Asked Questions

Q. What is the difference between an Azure Lakehouse and a Fabric Lakehouse?

A. While both use Delta Lake, a Fabric Lakehouse is a SaaS-managed artifact that automatically integrates with OneLake and provides a SQL analytics endpoint for T-SQL querying without any manual cluster configuration.

Q. Can I keep using Azure Databricks after moving to Fabric?

A. Yes. Fabric is an open platform. You can continue to use Azure Databricks and have it read from and write to OneLake using the Delta format, allowing you to choose the best engine for each specific task.

Q. How do Shortcuts help in the migration?

A. Shortcuts let you reference data in ADLS Gen2, or even AWS S3, from your Fabric Lakehouse without moving it. This enables a "zero-copy" migration strategy in which you can start using Fabric features immediately on your existing data.

Q. Is Microsoft Fabric more expensive than separate Azure services?

A. Generally, Fabric can be more cost-effective because of its unified capacity model. Instead of paying for idle time on multiple separate clusters, you use a shared pool of Capacity Units that dynamically allocates resources where they are needed most.

Q. How does the Direct Lake mode in Power BI work?

A. Direct Lake mode allows Power BI to read Delta Parquet files directly from OneLake storage. This provides performance close to that of an "Import" model with data freshness close to that of "DirectQuery," without the time-consuming full dataset refreshes that Import models require.

Q. What happens to my Azure Synapse Dedicated SQL Pools?

A. Dedicated SQL pools are not converted automatically; their schemas, code, and data are migrated to the Fabric Data Warehouse. The T-SQL experience is familiar, but the Fabric version is more integrated, uses OneLake as its storage, and eliminates much of the management overhead associated with Synapse.

Ready to Migrate from Azure to Microsoft Fabric?

Explore our specialized migration services or connect with our team for a tailored roadmap for your enterprise data estate.